Value-based health care: just reinventing the cost-effectiveness wheel?

If you follow me on Twitter you will know that I have an ongoing curiosity about value-based healthcare (VBHC). VBHC is a relatively new approach to making decisions about health service provision. It is currently in vogue with local decision makers, largely, it seems to me, because of the advocacy of Sir Muir Gray’s research group on VBHC.

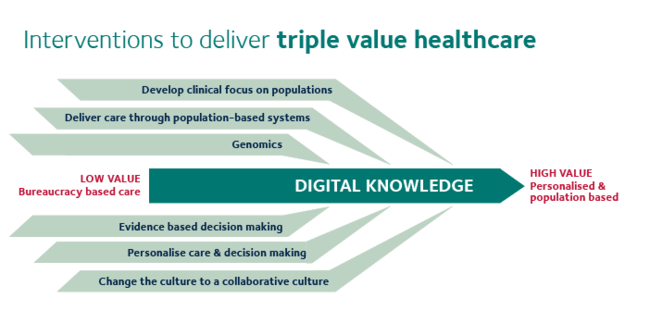

The VBHC Research Group’s illustration of the “Triple Value” framework

Because it is concerned with how to make decisions about healthcare provision, VBHC necessarily overlaps substantially with cost-effectiveness analysis, the approach advocated by health economists. VBHC departs from cost-effectiveness methods by considering equity more explicitly (who benefits from an intervention, as well as how much they benefit). It also discusses “personal value”, which seems to be largely about advocating for patient-centred care and shared decision making. Where it really comes into potential conflict with health economics is the third type of value: allocative value, which explicitly concerns itself with resource allocation (another term for healthcare decision-making).

A paper has recently been published on VBHC in the Journal of the Royal Society of Medicine (in the interests of full disclosure, by members of my own Department, which happens to include Gray). It’s a commentary that presents an interesting summary of the history of, and current thinking around, precision medicine, shared decision making and the increasing role of the patient in medical decision making. However, before getting into this, there is a short discussion of the VBHC framework in terms of population-level decision making (ie, allocative value, or resource allocation).

I have a couple of issues with this section, and I think it illustrates some of the ways in which VBHC has a tendency to reinvent the wheel on issues that health economics has been addressing for years.

Defining value

The first couple of paragraphs are largely common sense: population changes are reflective of changes happening at the level of individual patients, so it’s clear the two are related. The unit of analysis in any cost-effectiveness analysis is the patient; health outcomes for each individual are added up to give the population level effects. Where things get rocky is this line:

The second reason is that the value for each individual changes just like the value for the population as the investment in a service increases

What is the definition of value here? Is it patient health, and if so how do you define and measure health? Is it patient preferences? What about their family’s preferences? Should wider society get any say in what is “valuable”? Health economists have wrestled with these questions for decades. The way cost-effectiveness analysis currently does this is to define value as a combination of mortality and health-related utility (the economic term for preferences), known as the quality-adjusted life year (QALY).
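To make that concrete, here is a minimal sketch of the basic QALY arithmetic, assuming a simple profile of health states: each period of life is weighted by a utility between 0 (equivalent to death) and 1 (full health), and the weighted years are summed. The class and function names and the utility values are hypothetical, and the sketch leaves out the discounting of future years that most published analyses apply.

```python
from dataclasses import dataclass


@dataclass
class HealthState:
    years: float    # time spent in this health state
    utility: float  # preference weight: 1.0 = full health, 0.0 = dead (hypothetical values)


def qalys(path: list[HealthState]) -> float:
    """Undiscounted QALYs: years in each state weighted by that state's utility."""
    return sum(state.years * state.utility for state in path)


# Hypothetical patient: 2 years at utility 0.9, then 3 years at utility 0.6
patient = [HealthState(years=2, utility=0.9), HealthState(years=3, utility=0.6)]
print(round(qalys(patient), 2))  # 3.6
```

Five years of life lived in imperfect health count as 3.6 QALYs here. Every contested question above (whose preferences, measured how) is hidden inside the choice of `utility` values.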

This is probably the single largest issue I have with VBHC. Valuing health is, as I have written about before, a messy, uncomfortable process, and so far much of VBHC seems to gloss over this.

Linear harms and benefits

In Explorations in Quality Assessment and Monitoring, Donabedian described not only the concept of structure, process and outcome but also his ‘unifying model of benefit, risk and cost’. The power of this model is that it quantifies for the first time the relationship between resources invested in healthcare and the amount of value obtained from that level of investment.

I haven’t been able to access the book the authors cite here, although I did manage to find a 1966 review of the same name. This review is an exploration of the methods and challenges associated with identifying and measuring outcomes in healthcare, and makes no mention of cost at all.

In fact, there have been numerous attempts to quantify the relationship between health investments and health outcomes, both theoretical (beginning with Arrow’s 1963 paper and developed further in the Grossman model) and empirical, using cost-effectiveness analysis and health econometrics. The most famous empirical study is probably the RAND Health Insurance Experiment, which allocated 5809 American participants to different levels of health insurance coverage and measured their health outcomes over 8 years, from 1974 to 1982. Beyond this, cost-effectiveness analyses in one form or another have been published since 1968, and the earliest study quantifying the relationship between investment and health outcomes that the health economists of Twitter could think of actually dates from 1927.

While not the first, Earp’s 1927 study CORRELATION OF INFANT SALVAGE WITH NURSING EFFORT is a classic. No only does it estimate and apply a regression model, but also has a discussion of causality including use of the term “spurious correlation”. https://t.co/iMJropsrUN pic.twitter.com/f5rbX1rvXs

— Philip M Clarke (@pmc868) March 4, 2018

The paper continues:

Donabedian showed that as healthcare resources are increased, benefit increases initially, but the increase then flattens off, illustrating what some people have called the law of diminishing returns. Importantly – and this is often overlooked – the amount of harm done does not diminish as resources invested. For each unit increase in resource, there is a unit increase in the number of people treated and consequently a unit increase in the amount of harm done. In fact, there may be a progressive increase in the amount of harm done if, with each unit increase in the availability of care, patients who are less fit and more at risk of harm are covered by the service.

The idea of diminishing returns on an investment is an economic concept that makes intuitive sense. That does not mean it is universally true, though. It is a useful starting assumption in the absence of evidence on how effective a treatment actually is in different populations, but it is easy enough to think of situations where it may not apply. For example, a more experimental drug may be given first to patients who have few other treatment options, even though it may later turn out to be more effective in patients with a less severe form of the disease. In that case, extending treatment to those patients would produce increasing, rather than diminishing, returns.

Harm occurs in all health services, even those that are of the highest quality, as an inevitable consequence of the risks associated with the act of interventions such as X-rays, drugs and anaesthetics. As a consequence, there may come a point at which the investment of additional resources will lead to a reduction in the net benefit, calculated by subtracting the harm from the benefit, and there comes a point beyond which additional investment reduces the value derived from the resources. He called this the point of optimality.

Harms may sometimes increase linearly, but harms such as adverse events are not necessarily randomly distributed across patients, so they will not necessarily increase linearly with the number of people treated. The patient characteristics that make harms more likely may also be related to how effective the treatment is. For example, patients who experience more adverse events because they are frail might also benefit less from the treatment.

That said, the idea of balancing benefits and harms is integral to cost-effectiveness analysis, and nothing here runs counter to that. Many treatments will follow this shape of diminishing benefits and increasing costs and harms.

Opportunity cost

The more controversial point is the “point of optimality”. In Figure 1 of the paper, this is supposedly the point at which net benefits (benefits minus harms) are highest. This is the same calculation used in cost-effectiveness analysis, only with costs in place of (and encompassing) harms. Costs are plotted on the x axis, but they do not enter the net benefit equation at all: it is simply taken as a given that spending more increases both benefits and harms in a fixed, predictable way.

This diagram pays lip service to costs, but in fact you could remove them entirely and nothing in the figure would change: even if all treatments were free, there would be a point beyond which total health gains fall because of increasing harms, and that is where the figure says we should stop investing.
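The shape of that argument can be sketched in a few lines, assuming hypothetical curves: benefit rises with diminishing returns, harm rises linearly with each unit of resource, and the point of optimality is wherever benefit minus harm peaks. The functional forms and parameter values are illustrative only and are not taken from the paper. Note that nothing in `net_benefit` depends on what a unit of resource costs.

```python
import math


# Hypothetical curves chosen only to reproduce the shape described in the paper.
def benefit(r: float, b_max: float = 100.0, k: float = 0.05) -> float:
    """Benefit with diminishing returns: rises quickly, then flattens towards b_max."""
    return b_max * (1.0 - math.exp(-k * r))


def harm(r: float, h: float = 1.0) -> float:
    """Harm rising linearly: each unit of resource treats more people and adds h units of harm."""
    return h * r


def net_benefit(r: float) -> float:
    """Donabedian-style net benefit: benefit minus harm. The price of r never appears."""
    return benefit(r) - harm(r)


def point_of_optimality(max_r: int = 200) -> int:
    """Grid search over whole units of resource for the highest net benefit."""
    return max(range(max_r + 1), key=net_benefit)


r_star = point_of_optimality()
print(r_star, round(net_benefit(r_star), 1))  # 32 47.8
```

With these made-up parameters the peak sits at 32 units of resource (the analytic optimum is ln(5)/0.05, about 32.2). Doubling or halving the price of each unit would leave it exactly where it is.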

Cost-effectiveness analysis considers all of this, but it also considers opportunity cost: the fact that money spent on this could have been spent on something else. The “point of optimality” in a cost-effectiveness analysis is the one at which net benefits are highest once we account for the benefits that would have been gained by spending that money differently. Specifically, costs are expressed in terms of the benefits that would have been gained had that money been spent on other treatments in the health system. If opportunity cost is not considered, the only constraint on spending is the harm caused by testing and treating.
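Here is a minimal sketch of how opportunity cost changes the answer, using hypothetical curves (benefit with diminishing returns, linearly increasing harm) and made-up prices. It uses the net health benefit form of cost-effectiveness analysis: spending is converted into the health it displaces elsewhere by dividing by a threshold, interpreted here as the cost per QALY of the care that would otherwise be funded. All names and numbers are assumptions for illustration.

```python
import math


# Hypothetical benefit and harm curves, both measured in QALYs.
def benefit(r: float, b_max: float = 100.0, k: float = 0.05) -> float:
    return b_max * (1.0 - math.exp(-k * r))


def harm(r: float, h: float = 1.0) -> float:
    return h * r


def net_health_benefit(r: float, cost_per_unit: float, threshold: float) -> float:
    """Benefit minus harm minus the QALYs the same money would have bought elsewhere.

    Assumes displaced health = total cost / threshold, where threshold is the
    (hypothetical) cost per QALY of the care that would otherwise be funded.
    """
    displaced = r * cost_per_unit / threshold
    return benefit(r) - harm(r) - displaced


def optimal_investment(cost_per_unit: float, threshold: float, max_r: int = 200) -> int:
    """Grid search over whole units of resource for the highest net health benefit."""
    return max(range(max_r + 1),
               key=lambda r: net_health_benefit(r, cost_per_unit, threshold))


free = optimal_investment(cost_per_unit=0.0, threshold=20_000.0)
priced = optimal_investment(cost_per_unit=30_000.0, threshold=20_000.0)
print(free, priced)  # 32 14
```

With a unit cost of zero, the optimum is the same as in a plain benefit-minus-harm calculation (32 units). Once each unit costs 30,000 against a threshold of 20,000 per QALY, the optimum falls to 14 units, because beyond that point the health bought by the next unit is worth less than the health the same money would buy elsewhere.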

Harms from overdiagnosis and ineffective treatments are certainly a problem in healthcare, but if you allocated resources on the basis of minimising clinical harms, ignoring costs, you would very quickly run out of money.

The irony of allocative efficiency in research

VBHC has clearly captured the imagination of many decision makers. The breadth of its scope, opening up the possibility of considering decision making in healthcare through several lenses, is interesting.

Unfortunately, these issues (ignoring opportunity cost, not defining value, and assuming the relationship between costs and outcomes is clear-cut and predictable) would be enough to send any cost-effectiveness study home from peer review with a note. VBHC must engage with the past 50 years of health economics research, or risk adding very little new to the decision-maker’s toolbox.

Ironically, while VBHC advocates continue to reinvent this wheel, they absorb resources that could be better spent elsewhere, for example on generating new evidence that might actually benefit patients. I’d hate to see the enthusiasm and influence of this field succumb entirely to allocative inefficiency.